New Initials, Old Issues: How Remembering Y2K Can Help Us Think about AI

When imagining the sorts of AI-related hazards that threaten the future, there is a tendency to focus on the most disastrous possibilities. There is the doomsday scenario in which AI wipes out humanity, there is the dystopian scenario in which AI gives rise to an inescapable high-tech panopticon, and there is the depressing scenario in which the combination of AI-linked layoffs and AI-generated content saps humanity of its sense of meaning. Considering the frequency with which tech executives and financiers openly discuss these scenarios, and the way politicians waffle in their responses, it is understandable that a focus on these risks currently seems to dominate tech-related discourse. And yet, might the attention to such obvious existential risks distract us from seemingly more banal, but still quite serious, dangers?

To put it another way, what are the seemingly innocuous technical standards being adopted in the present that could come back to bite us in thirty years? How does the incorporation of AI into infrastructural systems keep even individuals who swear off using AI reliant on those systems?

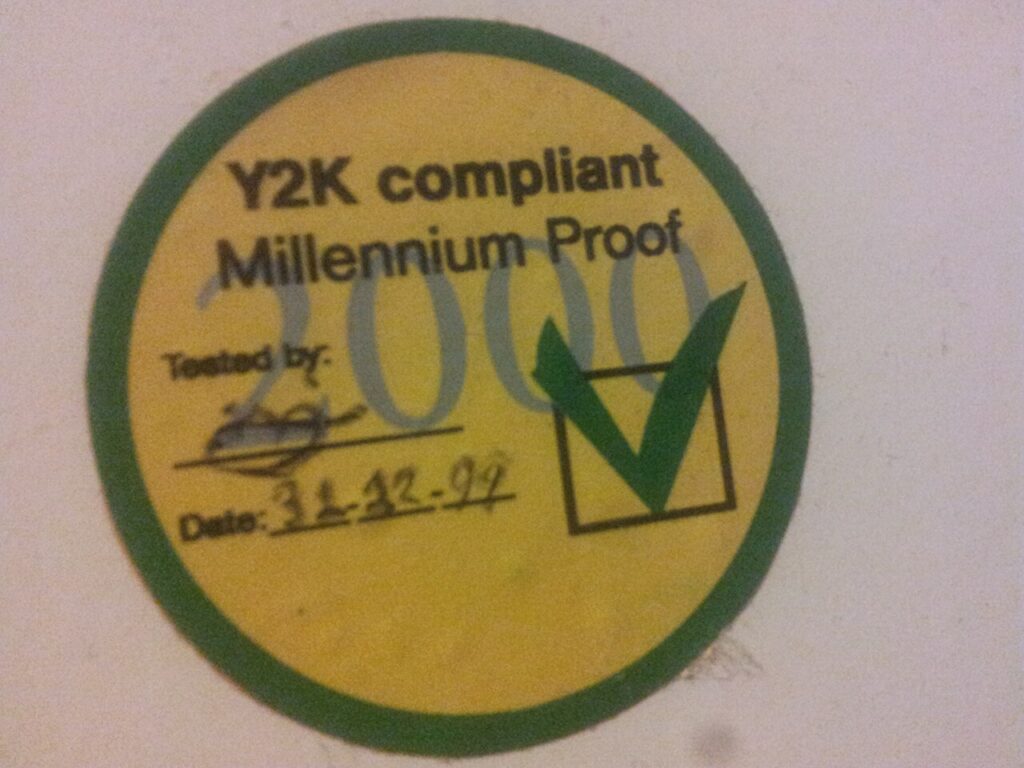

Admittedly, those are the sorts of questions raised not by the computing-related initialism driving so much consternation at the moment (i.e., AI), but by the computing-related initialism that was driving a lot of consternation just before the turn of the twenty-first century: Y2K. While there is an unfortunate habit of looking back at the year 2000 computer problem (generally referred to simply as Y2K) and shrugging or laughing, a more critical engagement with that event can provide an opportunity to ask about the sorts of risks societies surround themselves with once they embrace computer technologies.[1] There was a tendency at the time, and there is a tendency now, to think that Y2K was about computers bringing about the end of the world, but if you look beyond the hyperbolic headlines, Y2K had much more to do with confronting the risks of the computerized world.[2]

That was certainly the argument President Bill Clinton made while talking about Y2K before the National Academy of Sciences in the summer of 1998. There, Clinton reminded his audience that “the typical family home today has more computer power in it than the entire MIT campus had twenty years ago.”[3] And in expressing confidence that the problem could be fixed, Clinton noted, “if we act properly, we won’t look back on this as a headache, sort of the last failed challenge of the twentieth century. It will be the first challenge of the twenty-first century successfully met.”[4]

Y2K was a challenge that was successfully met. But based on recent reporting and polling, quite a few people are less than confident we will successfully meet the current batch of twenty-first century computing-related challenges. Indeed, you don’t have to spend long scrolling on social media before you’ll see some version of the sentiment “everyone needs to get more anti-AI now!” And while Y2K cannot reveal if AI is going to bring about the end of the world, Y2K can help us think about some of the ways AI may be reshaping the world as we know it.

An Initial Explanation of Y2K

Imagine that it is 1999 and you are trying to calculate the age of someone born in 1950. What do you do? Simple, you subtract 1950 from 1999 and get 49. And to be clear, you would also get 49 had you just subtracted 50 from 99. Now, imagine the year is 2000, and you are trying to calculate the age of that same person. What do you do? Same procedure, you subtract 1950 from 2000 and get 50 as the result. However, were you to lop off those two century digits, and subtract 50 from 00, the result would be -50.

If you are looking at that -50 and thinking to yourself, “that doesn’t make any sense, a person can’t be -50 years old,” congratulations, you are smarter than a computer. But for the numerous computer systems that had been programmed to use only two digits to record years, such results represented an issue.
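The subtraction described above can be sketched in a few lines of Python. This is only an illustration of the arithmetic, not a reconstruction of any particular system from the period; the function names are hypothetical.

```python
def age_two_digit(birth_yy: int, current_yy: int) -> int:
    """Age as a two-digit-year system would compute it (no century stored)."""
    return current_yy - birth_yy


def age_four_digit(birth_year: int, current_year: int) -> int:
    """Age computed from full four-digit years."""
    return current_year - birth_year


# In 1999 both approaches agree.
print(age_four_digit(1950, 1999))  # 49
print(age_two_digit(50, 99))       # 49

# In 2000 the two-digit version goes wrong.
print(age_four_digit(1950, 2000))  # 50
print(age_two_digit(50, 0))        # -50
```

Nothing in the two-digit version is "broken" in the usual sense: the subtraction runs exactly as written. The error lies in the missing century, which the program has no way to recover.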

Alas, computers at the close of the twentieth century could not be relied upon to make sense of such errant numbers. Upon encountering them, an impacted system might carry on (dates are not essential for everything), or it could accept those numbers as accurate and, in the process, pollute databases with inaccuracies, or the questionable calculations might cause the system to fail entirely. What is vital to note is that it was next to impossible to predict which of those scenarios would befall a particular system. Thus, the year 2000 computer problem: the race to check, and where necessary fix, these systems before the countdown clock read 00.[5]
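To make those three outcomes concrete, here is a rough sketch of three toy "systems" that all receive the same two-digit years. Everything in it (the function names, the sample record, the eligibility rule) is invented for illustration and is not drawn from any real system of the era.

```python
def two_digit_age(birth_yy: int, current_yy: int) -> int:
    """Shared calculation: subtract two-digit years with no century."""
    return current_yy - birth_yy


def mailing_label(name: str, birth_yy: int, current_yy: int) -> str:
    """Outcome 1: the date is never used, so the system carries on."""
    return f"To: {name}"


def store_age(records: dict, name: str, birth_yy: int, current_yy: int) -> dict:
    """Outcome 2: the bad number is accepted and written into the records."""
    records[name] = two_digit_age(birth_yy, current_yy)
    return records


def check_eligibility(birth_yy: int, current_yy: int) -> bool:
    """Outcome 3: the bad number stops the system outright."""
    age = two_digit_age(birth_yy, current_yy)
    if age < 0:
        raise ValueError(f"impossible age: {age}")
    return age >= 18  # hypothetical rule


print(mailing_label("A. Example", 50, 0))   # unaffected
print(store_age({}, "A. Example", 50, 0))   # silently stores -50
try:
    check_eligibility(50, 0)
except ValueError as err:
    print("halted:", err)                   # fails entirely
```

All three share the same faulty calculation; what differs is what each one does with the result. That difference is why it was so hard to know in advance how a given system would behave without actually checking it.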

As for the identity of the scheming villain responsible for computers recording years this way? These nefarious figures were none other than efficiency and cost effectiveness. In the 1960s computer memory was expensive, and truncating dates was a straightforward way to save memory and therefore to save money. So long as the calculations involved the same century, this solution worked quite well. But even as the cost of computer memory decreased, and the punch cards vanished, this method of recording dates using only two digits became the standard.[6]

An Initialism Explanation of Y2K

The technical issue is easy to explain, but to understand why this became such a problem it is useful to consider some other sets of initials.

To the extent that truncating dates became a standard procedure, it was in keeping with the development of actual standards, notably FIPS PUB 4 (Federal Information Processing Standards Publication 4) and ANSI X3.30-1971 (American National Standard X3.30-1971). Both set clear standards for the exchange of data, and both allowed the year to be represented using only two characters. ANSI X3.30-1971 noted “the four digit year may be truncated…where the century and decade are to be implied,”[7] while FIPS PUB 4 stated the date was to be “represented by a numeric code of six consecutive positions.”[8] ANSI X3.30-1971 had noted truncating should only be used under certain circumstances, and FIPS PUB 4 noted the digit representation could “be extended to four digits when information extends to more than one century.”[9] However, those were qualifications, and the specification was for dates to be represented using six digits (e.g., MM/DD/YY).
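What it means for the century to be "implied" can be shown with a small sketch. The field order used here (year, month, day) and the helper names are assumptions made for illustration; the point is only that six positions leave no room for the century.

```python
from datetime import date


def six_digit(d: date) -> str:
    """Encode a date in six positions, keeping only two digits of the year.

    The YYMMDD field order is an assumption made for this example.
    """
    return f"{d.year % 100:02d}{d.month:02d}{d.day:02d}"


def eight_digit(d: date) -> str:
    """Encode the same date with the year extended to four digits."""
    return f"{d.year:04d}{d.month:02d}{d.day:02d}"


new_years_1900 = date(1900, 1, 1)
new_years_2000 = date(2000, 1, 1)

# Two dates a century apart collapse into the same six characters.
print(six_digit(new_years_1900), six_digit(new_years_2000))
# 000101 000101

# With four-digit years they remain distinct.
print(eight_digit(new_years_1900), eight_digit(new_years_2000))
# 19000101 20000101
```

As long as every date in a dataset falls within one century, the six-position form loses nothing. Once the data span two centuries, the receiving system has to guess which one was meant.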

Shortly after these standards went into effect, the first published warning of what would eventually be known as Y2K appeared. Commenting on these standards, Robert Bemer noted: “It should be obvious” that, when it came to representing years using only two digits, “extreme caution should be exercised.”[10] Bemer’s use of the word “extreme” marks the only place his article could be accused of anything resembling hyperbole. Granted, in 1979, he would make the stakes clearer by warning that dropping the “first two digits for computer processing” might mean “the program may fail from ambiguity in the year 2000.”[11]

Despite Bemer’s early attempts to sound the alarm (and there were others who echoed his warnings), it was not until the turn of the century was only a few years away that the concern truly caught on. And once it did, it became all too easy to spotlight the most outlandish claims and the most hyperbolic media coverage, as opposed to the decidedly more sober (and knowledgeable) voices that sought to emphasize (as Clinton had noted in the aforementioned NAS address) that the problem was real but fixable, and that lots of people were working on fixing it.

Which brings us to another initialism: PDD-63 (Presidential Decision Directive 63). Its subject was “Critical Infrastructure Protection,” but what made this 1998 directive notable is how it acknowledged that the United States was “increasingly reliant” on “cyber-based information systems.”[12] Instead of celebrating the potential of these systems, PDD-63 recognized that as these “cyber-based” systems became “increasingly automated and interlinked,” they were in turn giving rise to “new vulnerabilities.”[13] PDD-63 was one of the results of President Clinton’s Commission on Critical Infrastructure Protection, which had been created to “assess the scope and nature of the vulnerabilities of, and threats to, critical infrastructures.”[14] In its reporting on “Protecting America’s Infrastructures,” that Commission had pointed to Y2K as “one well-publicized example of vulnerability associated with our dependence on computers,” which “if not corrected, has the potential to adversely affect the operation of all our infrastructures.”[15]

And why could Y2K “affect the operation of all our infrastructures”? Because those infrastructures had by that point become bound up with computer systems.

Speaking before the NAS, Clinton had emphasized this infrastructural point by noting that, “if not addressed,” Y2K might just cause “a rash of annoyances,” but he also noted, “It could affect electric power.”[16] This infrastructural emphasis was a key matter of concern for those tasked with overseeing and addressing the problem. Indeed, a few months before Clinton had created his Commission on Critical Infrastructure Protection, the US House of Representatives had held its first hearing on Y2K, at which multiple witnesses had framed it as an “infrastructure issue,” with the potential to impact everything from utilities (i.e., water, gas, electricity), to telecommunications, to the financial sector. As Assistant Secretary of Defense Emmett Paige Jr. testified: “As a society, we in this country have become dependent on computers.”[17] That dependency, as was made clear across dozens of congressional hearings and numerous government reports, had much less to do with the way any particular individual used a computer in their daily life, and much more to do with the way that an uncountable number of unseen computer systems were working in the background to make daily life possible.

In its initial report, the Senate Special Committee on the Year 2000 Technology Problem drew upon expert testimony and its own investigative work and observed that Y2K was providing “an opportunity to educate ourselves firsthand about the nature of twenty-first century threats.”[18] Furthermore, “the nature” of these threats was closely connected to an ever-deepening dependency on computer systems. And for a sense of one significant place where such threats could be found, look no further than the subject of the Special Committee’s very first hearing: “Utilities and the National Power Grid.”[19]

From Three Initials to Two

A quarter of the way into the twenty-first century, Y2K seems to be enjoying something of a resurgence, albeit one that has more to do with aesthetics than with the computing problem. Besides, there are new computing-related initials currently driving public concern.

Yet those seeking to make sense of the dangers presented by AI would be wise to look back at Y2K (the technical problem, not the baggy jeans). In doing so, the key challenge is to avoid getting distracted by references to the Book of Revelation and instead pay attention to the things Y2K revealed. Here, it can be useful to keep initials other than Y2K in mind. ANSI X3.30-1971 and FIPS PUB 4 both attest to the ways that serious problems can emerge from standards that are adopted in the name of efficiency and cost savings. Meanwhile, PDD-63 serves as an important reminder that the danger of Y2K was not that it was going to crash someone’s AOL Instant Messenger chat just as they were about to ask out their crush, but that computers had become critical infrastructure and critical to infrastructure.

In other words, when considering what risks AI may pose, instead of focusing on End of Days scenarios, it’s worth paying attention to the way AI (in the name of efficiency and cost savings) is being incorporated into daily procedures. Amidst all the understandable concern with how AI’s demands weigh heavily on already existing critical infrastructures, it is also worth considering what may happen as AI becomes interwoven with those critical infrastructures. While a fair amount of attention has been devoted to AI’s infrastructural impacts in utility areas like water and energy,[20] AI is also being incorporated into the financial sector,[21] telecommunications, and the oil and gas industries.

In the 1990s Y2K was understood to be a risk because of how computer systems were intertwined with utilities, government services, general business, financial services, transportation, telecommunication, healthcare, and much else. If one of those computer systems failed, it might cause significant issues in other areas. And at the present moment, AI is either already intertwined, or becoming intertwined, with all those same systems.

Y2K demonstrated that, by the close of the twentieth century, even those who did not consider themselves regular computer users were dependent on them (and on choices made by those who had built those systems). And it may well be that a quarter of the way into the twenty-first century, even those who swear off the use of AI are becoming dependent on those systems (and on choices being made by those building those systems).

Opining on “Computers and Our Society” in 1974, only a few years after directing a wary warning at Y2K, Bemer observed, “we are at the mercy of a system that must be fully operational.”[22] Twenty-five years later that warning proved prescient, and more than twenty-five years after that it still feels unnervingly apt. Considering this danger of being at the mercy of computer systems, Bemer wondered, “Perhaps there may come a day when the US augments its Environmental Protection Agency with a Human Protection Agency.”[23]

Perhaps that day is coming soon.

Footnotes

[1] Zachary Loeb, “Apocalypse When,” Empty Set, no. 1 (December 30, 2025), https://www.emptysetmag.com/articles/apocalypse-when.

[2] Zachary Loeb, “Life’s A Glitch,” Real Life, September 01, 2022, https://reallifemag.com/lifes-a-glitch/.

[3] Bill Clinton, “Remarks by the President Concerning the Year 2000 Conversion,” National Academy of Sciences, July 14, 1998, https://govinfo.library.unt.edu/npr/library/news/rmkpres.html.

[4] Clinton, “Remarks on the Year 2000 Conversion.”

[5] Leon Kappelman, Year 2000 Problem: Strategies and Solutions from the Fortune 100 (Boston: International Thomson Computer Press, 1997), 1–5.

[6] Capers Jones, The Year 2000 Software Problem: Quantifying the Costs and Assessing the Consequences (New York: ACM Press, 1997), 19–31.

[7] American National Standards Institute, Inc., American National Standard: Representation for Calendar Date and Ordinal Date for Information Interchange, ANSI X3.30-1971.

[8] US Department of Commerce, National Bureau of Standards, Federal Information Processing Standards Publication: Code for Information Interchange, Hardware Standard Interchange Codes and Media (November 1, 1968).

[9] US Department of Commerce, National Bureau of Standards, Federal Information Processing Standards Publication.

[10] R.W. Bemer, “What’s the Date?” Honeywell Computer Journal 5, no. 4 (1971): 205–208.

[11] R.W. Bemer, “Time and the Computer,” Interface Age Magazine 4, no. 2 (February 1979): 75.

[12] Bill Clinton, Presidential Decision Directive/NSC-63, “Subject: Critical Infrastructure Protection,” May 22, 1998.

[13] Clinton, Presidential Decision Directive/NSC-63.

[14] Exec. Order No. 13010, 61 Fed. Reg. 138 (July 17, 1996).

[15] Commission on Critical Infrastructure Protection, Critical Foundations: Protecting America’s Infrastructures (1997), 11.

[16] Clinton, “Remarks on the Year 2000 Conversion.”

[17] Is January 1, 2000, the Date for Computer Disaster? 2nd sess. Before Subcomm. on Government Management, Information, and Technology, 104th Cong. (1996), 78.

[18] Special Comm. on the Year 2000 Technology Problem, Investigating the Impact of the Year 2000 Problem, S. Prt. 106-10, at 12 (1999).

[19] Utilities and the National Power Grid, 2nd sess. Before Special Comm. on the Year 2000 Technology Problem, 105th Cong. (1998).

[20] James O’Donnell and Casey Crownhart, “We Did the Math on AI’s Energy Footprint. Here’s the Story You Haven’t Heard,” MIT Technology Review, May 20, 2025, https://www.technologyreview.com/2025/05/20/1116327/ai-energy-usage-climate-footprint-big-tech/.

[21] “Inside the Bank: How Artificial Intelligence is Changing Banking Operations,” Innovation at Work (blog), MIT Sloan School of Management, April 2, 2026, https://executive.mit.edu/inside-the-bank-how-artificial-intelligence-is-changing-banking-operations.html.

[22] R.W. Bemer, “Computers and Our Society,” Jurimetrics Journal 15, no. 1 (Fall 1974): 51, https://www.jstor.org/stable/29761454.

[23] Bemer, “Computers and Our Society,” 50.