Understanding Robotics Research and Education: A Conversation with Odest Chadwicke Jenkins

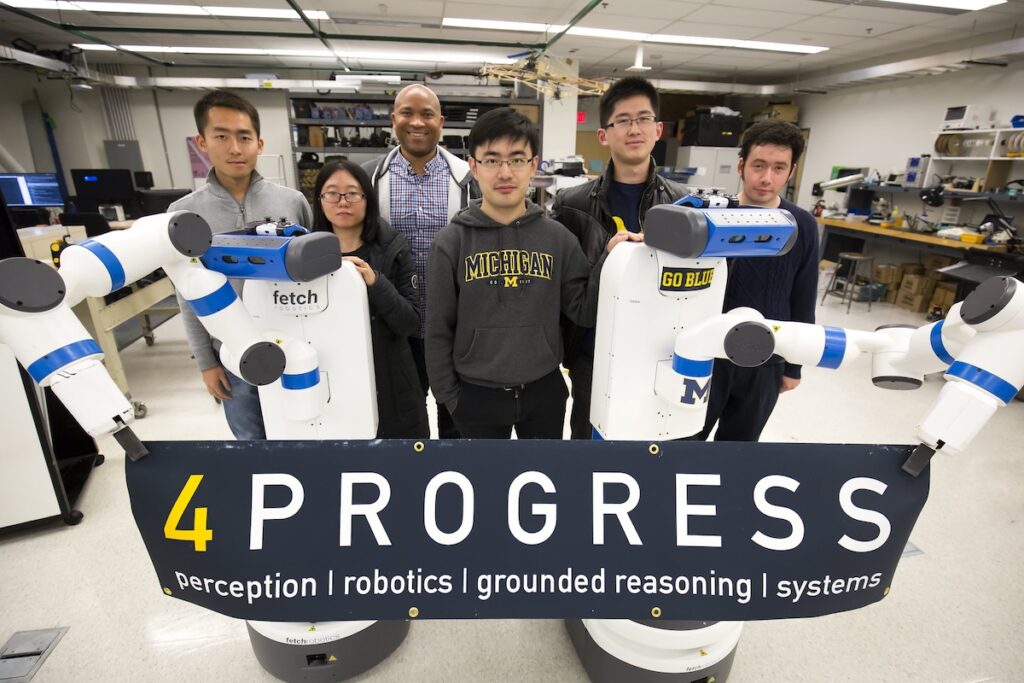

As the development and use of robots and artificial intelligence (AI) proliferate, some researchers are studying how best to make human-robot interactions more natural for users while considering the varied implications of these technologies for society. Following a visit to the University of Michigan’s Laboratory for Progress (Perceptive Robotics and Grounded REasoning SystemS), Jay Cunningham, 2023 Just Tech Fellow and assistant professor of human-computer interaction at DePaul University, interviewed Odest Chadwicke “Chad” Jenkins, professor of robotics and electrical engineering and computer science at the University of Michigan, about his research and thoughts on robotics and AI, the role ethics play in novel technologies, and educating and mentoring the new generation of technologists.

Jay Cunningham (JC): I’m so happy to be back with you, I’m still on a high from my visit. I had a chance to hang out and meet some folks, and of course, learn about your work. Though we had a chance to chat a bit then, I wanted to start off by asking how you first found your way into robotics and computer science? What has stayed constant or consistent in your curiosity about the field?

Odest Chadwicke Jenkins (OCJ): Thanks, Jay. It’s a pleasure to be here, and I’m an admirer of all the great work you do as well. All of it started with the Atari 2600. People probably don’t even know what that is anymore, but it was the first home video game console. It came out in the ’70s. I got mine on Christmas Day 1981, which was life changing.

I was used to just watching TV. But when I got the Atari, I could connect it to the TV. I could actually interact with those images and play games—Space Invaders, Asteroids, Breakout. I really wanted to make my own games, but at the time, I had no idea what an electrical engineer or a computer scientist was or how that machine worked, which was in effect a computer. I never thought, as I was growing up, that I’d be smart enough to make games for an Atari.

But for some reason, I decided to try computer science anyway. And I loved it. Going through undergraduate, I understood what it takes to make a game. I went to Georgia Tech for my master’s degree and learned all about 3D computer graphics. It turns out that what I really enjoyed was not necessarily the making of the games; it was the technology. It was the innovation. Everything that you need to know to make a video game for a virtual environment, you could also use to make a real robot work.

I was very fortunate to work with people at Georgia Tech, such as Professor Jessica Hodgins and Professor Nancy Pollard. They put me onto robotics. They said, “You’re doing all this great stuff with games and computer graphics. You could do that with robotics.” So, I did. They helped me get my PhD with Maja Matarić at the University of Southern California. I started working with her on humanoid robotics, and it just took off from there. I was fortunate to have great mentors and good inspiration along my way.

JC: That’s really, really inspiring to hear, and resonates with me. Did you know anyone growing up that was a game designer or an engineer or a roboticist?

OCJ: Absolutely not. Not even close. All my family’s from the Golden Triangle of East Texas. I am the only scientist in my family. My mom worked in higher education, but other than that, most of my family has no idea what I do. Especially in the ’70s, ’80s, early ’90s, it’s hard to find people that did this kind of work. So, I had to make my own way.

JC: Even to this day, I was the first Black man to graduate from my program. That was in 2020 something, right? So, it’s still a recurring thing. If you had to explain the through line of your work to someone who’s never taken a computer science or robotics class before, what’s the simplest way you would share that?

OCJ: Basically, robots are going to be a part of your life. Increasingly, you’re seeing autonomous cars, humanoid robots, drones, all different types of delivery robots. What we want to be able to do is allow you, as somebody who doesn’t code or doesn’t do anything with computer science, to be able to program robots to do what you want in a natural way, similar to how we would instruct another person to do something new. We want you to be able to teach robots by just demonstrating things to them and having them learn from that demonstration.

JC: When you say that some of your work involves making human-robot interactions more natural, but also having robots perpetuate human behaviors or actions, what does that mean? Can you tell us why this form of learning or programming of robots is important?

OCJ: Before I get into that, I should say that in order to make this whole ecosystem of robotics and AI grow, we need knowledge from all across the university. This isn’t just a computer science or an engineering thing. We need people who understand sociology, psychology, economics, the legal implications, the historical contexts. This brings the whole of the university, the whole of the academy to this problem. Mine is the computational part. How do I take data from what the robot is seeing of what you’re demonstrating and then turn that into something that the robot could do on its own?

Now, the hard part is not the technical part of having robots learn from data. We’re getting very good at that. Not perfect, but very good. But will people accept it? Will people be able to think it’s natural? Will it do the things that you want it to do? This is where we work with psychologists and researchers that understand human factors. We work together to teach robots to learn in a way that’s acceptable to people, so they’ll actually want to work with these robots. I think that’s a little bit of it. One more thing I’ll say about this is that, as a roboticist, I have to be an amateur labor economist as well.

Because the first question you get as a roboticist is, “Are you building Terminator and Skynet?” I’m like, “Okay, no, no, no, we’re not doing that.” The next question is, “Are robots coming to take my job?” I think we have to be very mindful of what we’re doing. We want to be able to teach robots how to perform tasks. There are certain things that robots are going to be good at, certain things that people are going to be good at, and we want to find the right balance. We can both improve our productivity and our quality of life together. That’s one of the major challenges that we study, not just the algorithms and the computer science, not just will it work for people, but also the larger societal implications.

JC: Langdon Winner discusses the circumstances in which technology in itself has political qualities. It depends on the social, the economic, the political contexts in which they exist.[1] To your point, many consumers, especially those who have seen robots, associate them with industrialization, but that’s different from what we presume to be consumer-based robots, things that people can have in their homes and systems that can be companions.

I think it presents a new set of social challenges and phenomena, but I take it the same way some of us have been studying AI, agentic AI. What happens when you empower agentic AI systems with robots? Robots are able to synthesize, respond back, and learn from the environment, not just from a preset program. We create much more human-like robot features, right?

OCJ: Absolutely. In my lifetime, I went from things that just seemed like science fiction that you would see on Star Trek or in cartoons, to that being everyday technology. I carry in my pocket my smartphone, which has more computing power than we had in the biggest computers sixty years ago. I can regularly have a video call with people like we’re having right now, which seemed mind-blowing then. You’ve seen that growth in how technology has evolved from when I was a kid in the ’80s to what you’re seeing now in 2025, and you’re going to see that same growth for robotics and AI.

However, there are some major issues that we have yet to figure out with AI systems. If you’re following what AI does, AI tends to change drastically every fifteen years or so. So, there may be some model or idea that you’re not thinking about right now that will become the mainstream in a couple of years.

JC: You’re hitting on something that I wanted to bring up next: ethics and the future of robotics. Based on what you just said, where do you see the most significant risks in developing robotics and AI today, and what implications can they have for the future?

OCJ: The biggest issue we have with modern artificial intelligence and its application to robotics is that we just don’t know when the AI is right or when it’s wrong. It’s right most of the time. It gives us something that seems plausible, seems like it’s a good idea. If it’s wrong, it doesn’t necessarily tell us it’s wrong. It just says with high confidence, “Here’s the answer.” That’s a big issue for broader use. When you’re asking it to help you write a letter, the repercussions are not that great.

However, when you’re talking about tasks for a robot that has a lot of force and power and may be around people, now you’re talking about potential real damage that can be done in the physical world. So, we have to be that much more careful about the models used on real robots simply because of the potential harm and the cost involved. Figuring out when AI is right, and how we can know that it’s right, will drive the next generation of AI research.

JC: Something you and I share an overlapping interest in is ethics, especially in human-robot interaction.

How do people want to live and work with robots? This question wasn’t necessarily a part of applied robotics, but it’s significant for understanding the social implications of robotics. A lot of these questions extend from sociology: what makes us as people feel comfortable with one another, and how we oftentimes try to replicate that type of interaction between robots and humans. It’s a challenge that’s not so easily met.

OCJ: I think what you’re seeing—and it’s the same with computing—is that you have to bring all our body of knowledge as a society, as humanity, to bear when you’re thinking about technology, its implications, and how it’s used across society. The same is going to be true with AI, the same is going to be true with robotics. I would love to be able to say that I could give you good answers about social robotics and about the way people are going to accept it and the ethics of it. I am an amateur ethicist at best.

In fact, when we were thinking about making a robotics major, I asked my good colleague and Human-Robot Interaction researcher, Holly Yanco, who’s now at UMass Amherst, “Holly, what do you think about making a robotics major?” She said, “Well, what’s the best major to be a roboticist?” I was like, “Huh.” What is the best major to be a roboticist?

JC: Mechanical engineering.

OCJ: Mechanical engineering? Yeah, that’s a good one. The actual answer is you need to be a quadruple major in mechanical engineering, electrical engineering, computer science, and math with a minor in psychology. As trained engineers, trained mathematicians, we get very little training in psychology, ethics, and philosophy, and we don’t always have time to fit it in. That’s why we need good collaborations. We have to be multidisciplinary in how we work.

So, instead of trying to be an ethicist myself, I try to work with ethicists who can help me understand and address those problems together. The thing I worry about in robotics is that we may be following computer science: We developed all this computing technology, but we didn’t think about the implications. We didn’t think about security. We didn’t think about privacy. We just made it.

JC: And retroactively go back and try to fix things.

You talked about how you created the robotics major. You’re absolutely right about how ethics, especially in technical spaces, is not often perpetuated. Most of us weren’t trained in this space. I think for many of us, this idea of critical computing has emerged from teaching ethics and criticality alongside computing and engineering.

So, as an educator, how are you thinking about ethics and robotics education? When you’re trying to train the next generation of roboticists, what principles or values do you emphasize to help your students think critically about the social impacts of their work? Do we need to have more of that happening in robotics fields?

OCJ: In the design of the robotics major, which I led (there’s a great paper on arXiv[2] about it for those interested in a detailed look at the design of the major), one of the things that we were able to do that I really take a lot of pride in is that we were able to put a Human-Robot Systems class in the third semester in the sophomore year of robotics majors. The tagline of the class is “how to work with people to design robots for people.” That is being able to engage with people on a team, working with stakeholders, which we oftentimes don’t talk about, or we leave until the last year of a computer science program.

Talking with the people who are going to be using this technology, people who are going to be impacted by the technology is important as well as understanding the use case for what you want to do and then also being able to understand the implications of that technology. So, the process roughly is you work with stakeholders to understand their needs and potential solutions. You work with a team to develop a technological solution, and then consider the implications: What could this look like, and what are you testing for? How are you going to evaluate its effectiveness, its potential harms, and its potential benefits?

I’m proud that we were able to raise these questions earlier in the curriculum to help guide how students think about more advanced technical concepts. When our students are implementing particle filters and forward kinematics and going through mechatronics, we are encouraging them to really think through a project, its stakeholders, and its implications as opposed to pushing it off until the end of their studies. So, I think we do have to move a lot of that professionalism, ethics, project management, and use case analysis earlier on in the curriculum.

JC: One of the things that you’re also touching on is this idea of not being so fixated on technosolutionism. We are in the business of solving problems. We just solve them technically, but it doesn’t mean that every problem must have a technical solution or need our solutions. It means fully understanding where problems and outliers exist.

But some people, especially when it comes to technical artifacts, get excited about building the thing. But it may not have value, or the thing doesn’t do what is needed, which can pose all sorts of other challenges and issues as well.

OCJ: You make the exact point as our Human-Robot Systems class. The most advanced technical solution to a problem may not be the best solution. Sometimes something very simple could be the right thing to do. Knowing when to use the technology or when to go with something different—maybe it’s a social system, maybe it’s some mechanism for how people work together, maybe it’s a procedural change—and understanding that larger set of solutions is important, because you could spend a lot of money developing a sophisticated technological solution that wasn’t the right thing. Then all that money is wasted. Getting computer scientists and engineers to appreciate the human dimensions of their work, beyond coding, can be an uphill climb.

JC: Exactly. They have a lot of pride for what they do.

OCJ: Rightfully so, but somebody should have said, “Maybe not.”

JC: Yeah, exactly. These are great values. I want to pivot here to talk about your teaching and mentoring. When I was there at Michigan, I had a chance to meet two of your students, check out your lab, walk around, and see robots. Can you tell me a little bit more about what it means for you to be a mentor in the robotics space? How has that journey been for you and what keeps you motivated?

OCJ: That’s a fabulous question because it gets to the core of what we do in higher education. Sometimes people want to make higher education more like a company, but companies at the end of the day are about producing products and profit. That’s not what we do. In higher education, we produce people and ideas. We train people about the ideas that we know about today. We teach them how to put the ideas of our discipline into practice through an undergraduate major.

A lot of the same things we cover across majors could be applied broadly. It’s just a lens through which to see the body of knowledge. We also teach students how to extend those ideas to new knowledge, which gets to our mission of doing research and scientific exploration. If I do a good job in the classroom, then students can come to the research lab and we can say, “We studied this in class, but you know what? Maybe there’s a better way to do this.” We could think about new ways of doing kinematics or autonomous planning. That essentially helps us expand what we can do as a society, as humanity.

It’s not necessarily the products that we produce, it’s not our publications, it’s not even the startup companies that come out, but it’s the people that we’re producing, the training that we’re giving them. This is the most important thing that we do. We are educating future leaders, future contributors to the workforce, future innovators. When I reflect on all the people I’ve had in class and all the great things that they’re doing, that’s what warms my heart and keeps me moving forward.

JC: That’s awesome. I heard a bunch of your stories during my trip to Michigan, but it’s good to hear this even more. Well, you’ve already shared a lot about your research, your work, your teaching, but we’re curious about what’s feeding your imagination right now. What are you currently working on?

OCJ: There’s one thing that I’m spending a lot of time on. This follows from what we talked about on the purpose of higher education and thinking about students. I’m working on establishing what we call Distributed Teaching Collaboratives in robotics and AI. The idea of a Distributed Teaching Collaborative is that faculty come together from across different universities to codevelop courses in an open-source manner.

Imagine a GitHub for college classes, where we release those materials as open source and use them to better teach classes. I don’t think people realize how much effort it takes to run a class in modern higher education. There are so many systems that we use: Canvas, Google Drive, YouTube, Keynote, PowerPoint, Gradescope, PrairieLearn. We haven’t even talked about email and student Q&A through systems such as Piazza, Slack, Discord, and myriad other options.

We always want to make sure that we address accommodations for students’ needs in the classroom as well. That’s an important aspect of accessibility, but it’s a lot for a faculty member to run. At Michigan, I’m fortunate because I focus on research. I teach one class a semester, but my colleagues at teaching-focused schools, at minority-serving institutions, at historically Black colleges and universities, have teaching loads of three or four courses each semester, and sometimes up to five courses a semester.

If you have to teach that many courses and it takes this much effort, that takes away from your time for developing new courses in robotics and AI or being able to engage in research. But, if we can build this Distributed Teaching Collaborative for these courses, then students who come through those classes would probably be the students I’d love to recruit for graduate school, and we would build good relationships with other universities as well. So, in this era where we must rethink higher education into something that’s more affordable, more effective, and more efficient, Distributed Teaching Collaboratives offer a model with a lot of positive potential.

JC: Would students have to be enrolled in an institution to find these courses, is it just for educators, or could this be some type of learning forum where these courses or the material could be available for students to learn?

OCJ: I think everything that we teach in class these days is already online. Everything that I talk about, you could find on Wikipedia. But when you read it on Wikipedia, it may not be the easiest to understand. Everybody learns in different ways. While all our course concepts are on the internet, are you sure you are getting good information from good sources? What we’re doing as faculty is packaging existing knowledge and conveying it to students in a manner that helps them learn. Even if we do a great job of teaching through lectures and online material, a lot of the learning happens during office hours, one-on-one, or one in a small group.

So, we can never really give up that personal touch that comes with an instructor working with students directly. Even if the material’s available for a student, it really helps to have a teacher, somebody who’s guiding you through the material and showing you what you need to know. So, we’re really thinking about the instructors in this case. If we can help instructors be more effective, they can help students be more effective, and those students can have better outcomes and more opportunities.

JC: As someone who teaches a lot, that’s something that I would really benefit from as well, especially being an early-career faculty member and researcher. My last question for you is, are there any younger scholars or emerging researchers whose work you’d want to lift up for our readers to know about or whose work you currently admire? Who should we be on the lookout for? You want to name-drop anybody?

OCJ: Chris Crawford, who was young when I was a faculty member, is now establishing himself. But I think the real answer is that there’s so much good work out there, which is unlike when I came up. It is now massive. I can’t pick somebody out and say, “Oh, that person, that’s the hot person.” There are so many and it’s now more global. You’re seeing more access in places that didn’t have access before. You’re probably going to see growth in South America and Africa, Eastern Europe, areas of Southeast Asia that are now building up a research presence.

You’re seeing more people engage in research around the country who didn’t or couldn’t when I was coming up. It was less diverse and now it’s getting more diverse. But I think the one thing that I would hit home on to underscore, emphasize, highlight, underline is that, in order for this to be successful, the country has to be willing to invest in the scientific enterprise. What made a lot of this possible were the early investments that happened post–World War II by people like Vannevar Bush that gave us this fabulous engine of innovation and science and technology. It costs money to do it, but the return on investment is huge.

So, I would encourage my fellow citizens to invest in our future and invest in a diverse and excellent body of scholars who are going to help us shape the future.

This interview has been edited for length and clarity.

Footnotes

[1] “Do Artifacts Have Politics?” Daedalus 109, no. 1 (1980): 121–136, https://www.jstor.org/stable/20024652.

[2] Odest Chadwicke Jenkins et al., “The Michigan Robotics Undergraduate Curriculum: Defining the Discipline of Robotics for Equity and Excellence,” preprint, arXiv, August 14, 2023, https://doi.org/10.48550/arXiv.2308.06905.